Hi All,

Does the input/output phase jitter (?) change with the block size of the audio transfer framework?

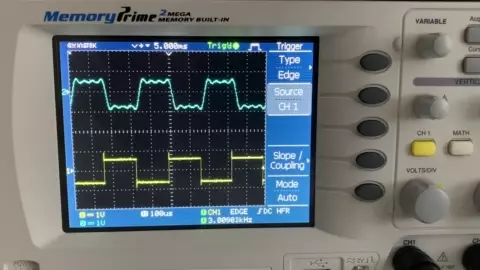

You can see that the input/output jitter characteristic changes with the block size. I'm applying a 3 kHz square wave to the codec input (yellow trace on the scope) and probing the codec output directly (blue trace on the scope).

I'm triggering the scope on the codec output and watching the relationship between the input and output signals. The phase delay between input and output should stay stable, but it jitters.

The test code is below. I've run the test with different SetAudioBlockSize parameters. It seems that the larger the block size, the bigger the jitter.

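```cpp
#include <string.h>

#include "daisy_seed.h"

using namespace daisy;

DaisySeed seed;

// Digital pass-through: copy `size` samples from the input buffer
// straight to the output buffer, unchanged.
static void Callback(float *in, float *out, size_t size)
{
    memcpy(out, in, size * sizeof(float));
}

int main(void)
{
    seed.Configure();
    seed.Init();
    seed.SetAudioBlockSize(512); // varied between test runs
    seed.StartAudio(Callback);

    while(1) {}
}
```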

I’m using the latest DaisyExamples repo as of now.

Thanks.

Here you can see my hardware setup as well.

Nothing fancy. I’m applying the test signal and probing input and output.

I'm not sure this jitter even matters, but it seems easy to reproduce and interesting, so I'm asking whether there is any technical explanation behind it.

Thanks again.

I am a total noob with Daisy, so I can’t help you. I have to learn the tools, the libraries, and a little C++ first.

But I am an EE and audio electronics guy, and I can safely say yes, this totally matters. No quality audio system should work like this. I hope there is a simple and easy answer for you.

Frankly, I am surprised and a little dismayed that you haven’t gotten any response to your post. I know that I will be in a similar position (needing help) once I receive my Pod and try to get it up and running. I hope that E-S and the Support Forum can provide some support!

There's also no official response from Electrosmith about the annoying 1 kHz buzz, though users have found some workarounds.

On the topic of jitter, I'm curious whether it's on the input, the output, or both. The original post is about a digital pass-through, so it's not obvious.

Yes, for serious HiFi work, jitter is bad. It’s arguably less important for synths, effects, etc.

I suspect the jitter is on the output, because changing the "BlockSize" in the firmware can't affect the signal source. I believe the signal source is solid, but the digital pass-through version has this delay jitter, and its amount changes with the block size argument.

The experiment is easily reproducible, and I’m wondering about an official response.

Thanks.

I am suspecting now that the jitter is in your source. Even the duty cycle of the input square wave is not solid. Are you using a real signal generator for the input?

Since it looks like you have a good scope, you can test the jitter of the input itself. Trigger on your input square wave, then crank your trigger delay to the left by several milliseconds. This way, you are looking at the input milliseconds after the trigger - in a way, simulating the buffer delay of your Daisy. If it jitters like in your video, then it is your source, not the Daisy.

Thanks for the suggestion!

No, I'm not using a "proper" signal generator. I'm using a self-made, FPGA-based signal generator, and it seems the FPGA's system clock itself has jitter. Thanks for helping me troubleshoot.

I arranged the scope to trigger on the input, then gave a 5ms horizontal delay.

… and apparently the jitter was in my input signal all along.

So I'll redo this test after fixing my signal source, but the Daisy framework is most probably fine.